What is Virtual Staining?

Virtual staining is a computational approach that replaces the chemical staining step in histology workflows with digital, AI-generated histologic stains. It preserves tissue, reduces staining variability, and supports pathologist interpretation rather than performing autonomous diagnosis.

Virtual staining represents the next architectural shift in histologic workflows, from chemical contrast to computational contrast. This approach enables reproducible digital stains without chemical reagents, allowing tissue to remain intact for additional testing while supporting scalable digital pathology workflows.

How it Works


Capture

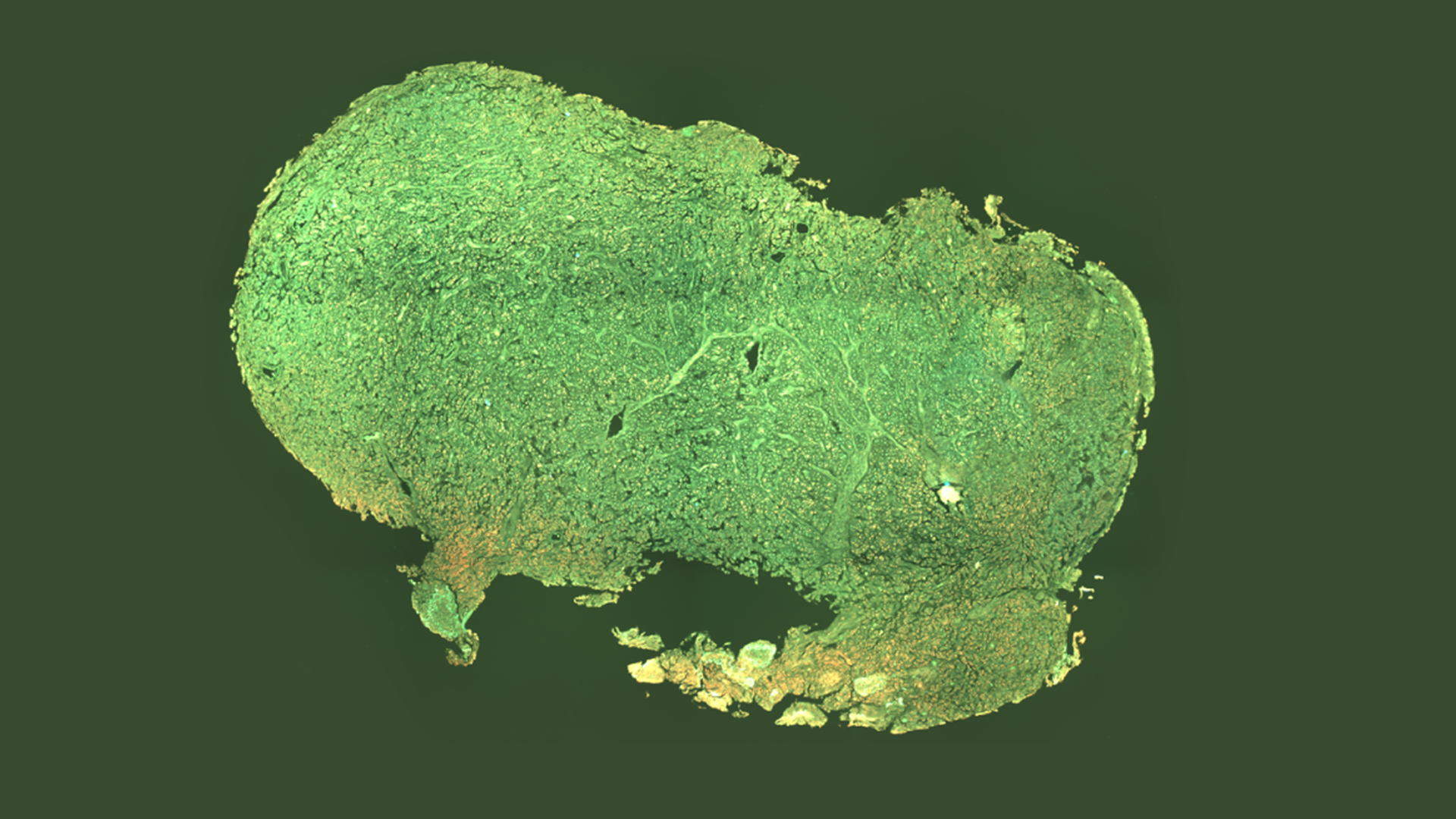

Endogenous fluorophores are naturally occurring molecules within tissue that emit fluorescent signals when excited by specific wavelengths of light. These signals provide intrinsic structural contrast without the need for chemical dyes. Pictor Labs captures this autofluorescence signal to generate high-resolution images of unlabeled tissue samples.
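Before a model can use it, a raw autofluorescence capture is simply a multi-channel intensity image. The sketch below assumes an (H, W, C) NumPy array in which each channel holds one excitation/emission band, and rescales each channel to [0, 1] with percentile clipping so that channels of very different brightness become comparable model inputs. The function name, channel layout, and 1st/99th percentile bounds are illustrative assumptions, not Pictor Labs' acquisition or preprocessing settings.

```python
import numpy as np


def normalize_autofluorescence(stack: np.ndarray,
                               low_pct: float = 1.0,
                               high_pct: float = 99.0) -> np.ndarray:
    """Rescale each channel of an (H, W, C) autofluorescence stack to [0, 1].

    Hypothetical preprocessing step: each channel is assumed to hold one
    excitation/emission band. Intensities are clipped to that channel's
    [low_pct, high_pct] percentiles so a few saturated or very dark pixels
    do not dominate the scaling.
    """
    stack = stack.astype(np.float64)
    out = np.zeros_like(stack)
    for c in range(stack.shape[-1]):
        channel = stack[..., c]
        low, high = np.percentile(channel, [low_pct, high_pct])
        if high > low:
            out[..., c] = np.clip((channel - low) / (high - low), 0.0, 1.0)
        # A flat channel carries no contrast and is left at 0.
    return out


# Usage with synthetic data standing in for a 12-bit, two-channel capture.
rng = np.random.default_rng(0)
raw = rng.integers(0, 4096, size=(64, 64, 2), dtype=np.uint16)
normalized = normalize_autofluorescence(raw)
print(normalized.shape, normalized.min(), normalized.max())
```

Per-channel scaling is used here because different excitation bands can differ in raw intensity by large factors; a single global scale would let the brightest band swamp the others.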

AI Registration & Mapping

The captured autofluorescence image is processed by a deep learning model trained on paired images of the same tissue, captured unstained (autofluorescence) and after conventional staining. Through image registration and feature mapping, the model learns the relationship between intrinsic tissue signals and their corresponding histologic appearance.

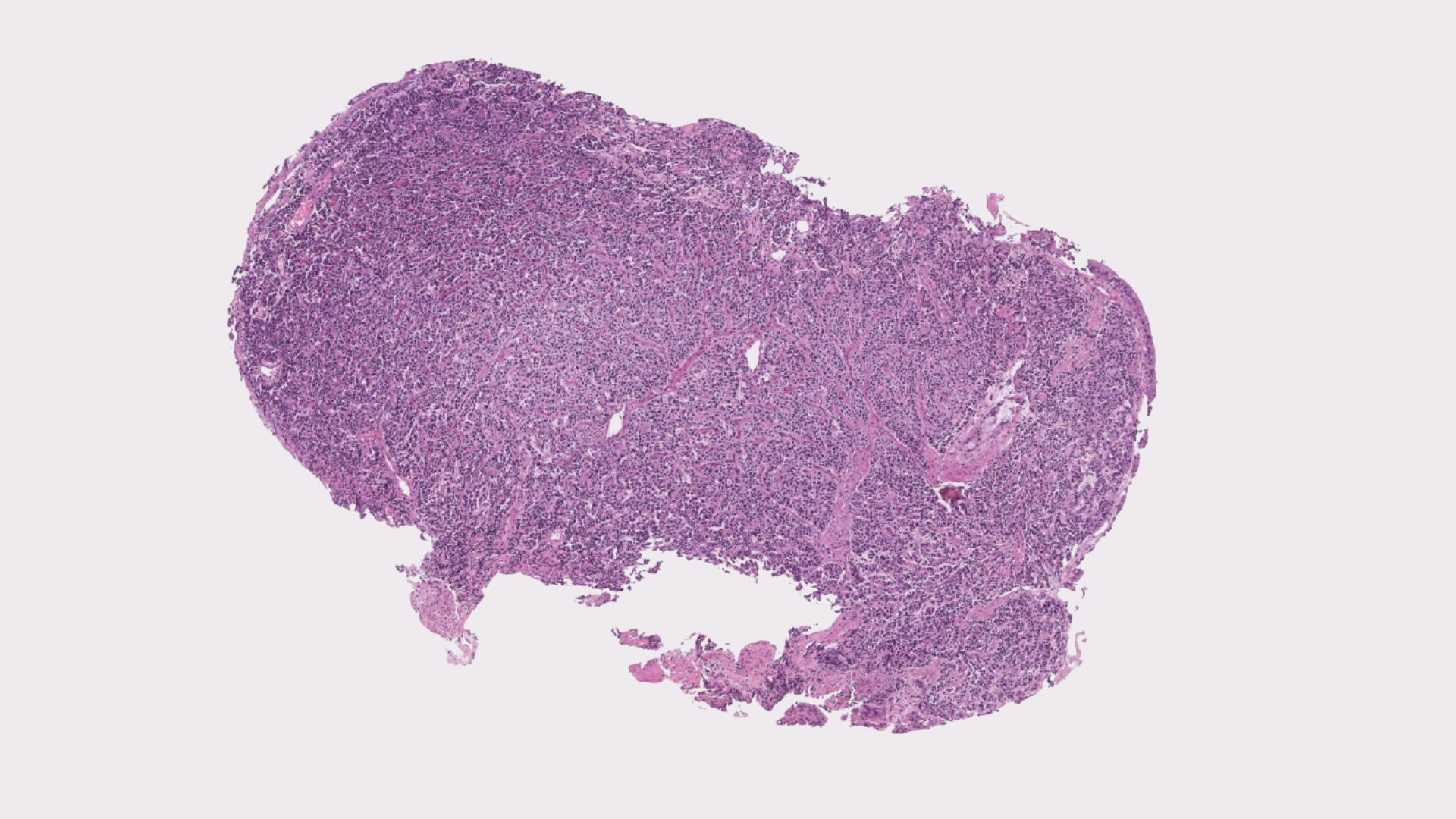
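Registration aligns each autofluorescence image with its stained counterpart so that corresponding pixels show the same tissue location. The exact registration method is not described here; the sketch below uses phase correlation, a standard FFT-based technique, and assumes the misalignment is a pure integer translation. Slide-scale pairs may also need rotation, scaling, or non-rigid correction, and real autofluorescence and brightfield images differ in contrast, so the synthetic test (a shifted copy of one image) is the easiest possible case. `estimate_shift` and `apply_shift` are hypothetical helper names.

```python
import numpy as np


def estimate_shift(reference: np.ndarray, moving: np.ndarray) -> tuple[int, int]:
    """Estimate the integer (row, col) shift that aligns `moving` to `reference`.

    Phase correlation: the normalized cross-power spectrum of two images that
    differ only by a translation inverse-transforms to a sharp peak at that
    translation. Assumes 2-D grayscale arrays of the same shape.
    """
    cross_power = np.fft.fft2(reference) * np.conj(np.fft.fft2(moving))
    cross_power /= np.abs(cross_power) + 1e-12  # keep phase only; avoid divide-by-zero
    correlation = np.fft.ifft2(cross_power).real
    peak = np.unravel_index(np.argmax(correlation), correlation.shape)
    # Peak indices past the midpoint wrap around to negative shifts.
    row, col = (int(p) - n if p > n // 2 else int(p)
                for p, n in zip(peak, correlation.shape))
    return row, col


def apply_shift(image: np.ndarray, shift: tuple[int, int]) -> np.ndarray:
    """Translate an (H, W) or (H, W, C) image by `shift` with circular wrap-around."""
    return np.roll(image, shift, axis=(0, 1))


# Synthetic check: offset a random "brightfield" image and recover the offset.
rng = np.random.default_rng(1)
brightfield = rng.random((64, 64))
autofluor = np.roll(brightfield, (5, -3), axis=(0, 1))  # misaligned copy
shift = estimate_shift(brightfield, autofluor)
aligned = apply_shift(autofluor, shift)
print(shift, np.allclose(aligned, brightfield))
```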

Virtual Stain Generation

During model training, autofluorescence images are co-registered with matching brightfield images obtained after conventional staining. This alignment enables the model to learn how unstained tissue features correspond to traditional histologic stains such as H&E or IHC. During inference, the trained model applies this learned transformation to new, unstained tissue images, generating virtual stains that visually replicate conventional microscopy. Because tissue is not chemically altered, multiple computational stain transformations can be applied to the same sample.
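The two phases described here, learning from co-registered pairs and then applying the learned transformation to new unstained images, can be illustrated with a deliberately simplified stand-in. The model in the text is a deep network (image-to-image translation with convolutional and generative models); the sketch below instead fits a per-pixel linear least-squares map from autofluorescence channels to RGB. The function names, the two stain names, and the synthetic data are hypothetical and exist only to show the train/infer split.

```python
import numpy as np


def _with_bias(autofluor: np.ndarray) -> np.ndarray:
    """Flatten (H, W, C) pixels to rows and append a constant bias column."""
    x = autofluor.reshape(-1, autofluor.shape[-1])
    return np.hstack([x, np.ones((x.shape[0], 1))])


def fit_stain_mapping(autofluor: np.ndarray, stained_rgb: np.ndarray) -> np.ndarray:
    """Training: fit a per-pixel linear map from one co-registered pair.

    autofluor: (H, W, C) unstained input; stained_rgb: (H, W, 3) image of the
    same tissue after chemical staining. Returns (C + 1, 3) weights.
    """
    y = stained_rgb.reshape(-1, stained_rgb.shape[-1])
    weights, *_ = np.linalg.lstsq(_with_bias(autofluor), y, rcond=None)
    return weights


def apply_stain_mapping(autofluor: np.ndarray, weights: np.ndarray) -> np.ndarray:
    """Inference: turn a new unstained image into a virtual RGB stain in [0, 1]."""
    h, w, _ = autofluor.shape
    rgb = _with_bias(autofluor) @ weights
    return np.clip(rgb, 0.0, 1.0).reshape(h, w, -1)


# Synthetic training pairs for two hypothetical stains, generated from known maps
# (rows: channel 0, channel 1, bias; columns: R, G, B).
rng = np.random.default_rng(2)
train_x = rng.random((32, 32, 2))
true_maps = {
    "stain_a": np.array([[0.3, 0.1, 0.2], [0.2, 0.4, 0.1], [0.2, 0.3, 0.5]]),
    "stain_b": np.array([[0.1, 0.3, 0.3], [0.4, 0.2, 0.1], [0.3, 0.2, 0.4]]),
}
models = {name: fit_stain_mapping(train_x, apply_stain_mapping(train_x, m))
          for name, m in true_maps.items()}

# Inference: both virtual stains are generated from the same, unmodified input.
new_x = rng.random((16, 16, 2))
virtual = {name: apply_stain_mapping(new_x, w) for name, w in models.items()}
```

Because inference only reads the input array, any number of fitted stain mappings can be applied to the same image, which mirrors how one unaltered sample can receive multiple computational stains. A per-pixel linear map ignores spatial context such as cell shape and tissue architecture, which is one reason practical virtual staining relies on deep networks instead.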

Virtual staining emerged at the intersection of three structural pressures in pathology:

- Increasing molecular and tissue-preservation requirements

- Global chemical reagent supply instability

- The digitization of diagnostic workflows for improved storage and efficiency

As pathology transitions toward AI-native infrastructure, computational staining offers a scalable alternative to chemistry-dependent contrast generation.

Early adopters are typically institutions pursuing digitization-first or tissue-preserving workflows, including:

- Molecular pathology labs

- Digitization-first hospital systems

- Research institutions

- High-throughput reference laboratories

Virtual staining is not:

- Post-processing color normalization

- Synthetic image hallucination

- Autonomous diagnostic AI

- Replacement for pathologist interpretation

Virtual staining builds upon advances in image-to-image translation, convolutional neural networks, and generative modeling demonstrated across multiple peer-reviewed studies in digital pathology.
